Intel joins Tesla and SpaceXAI on $25B Orbital AI Bet

What I Read This Week: a summary of the content that I consumed this past week…

Caught My Eye…

1) Terafab: Intel Joins Musk’s $25B Bet on Orbital AI

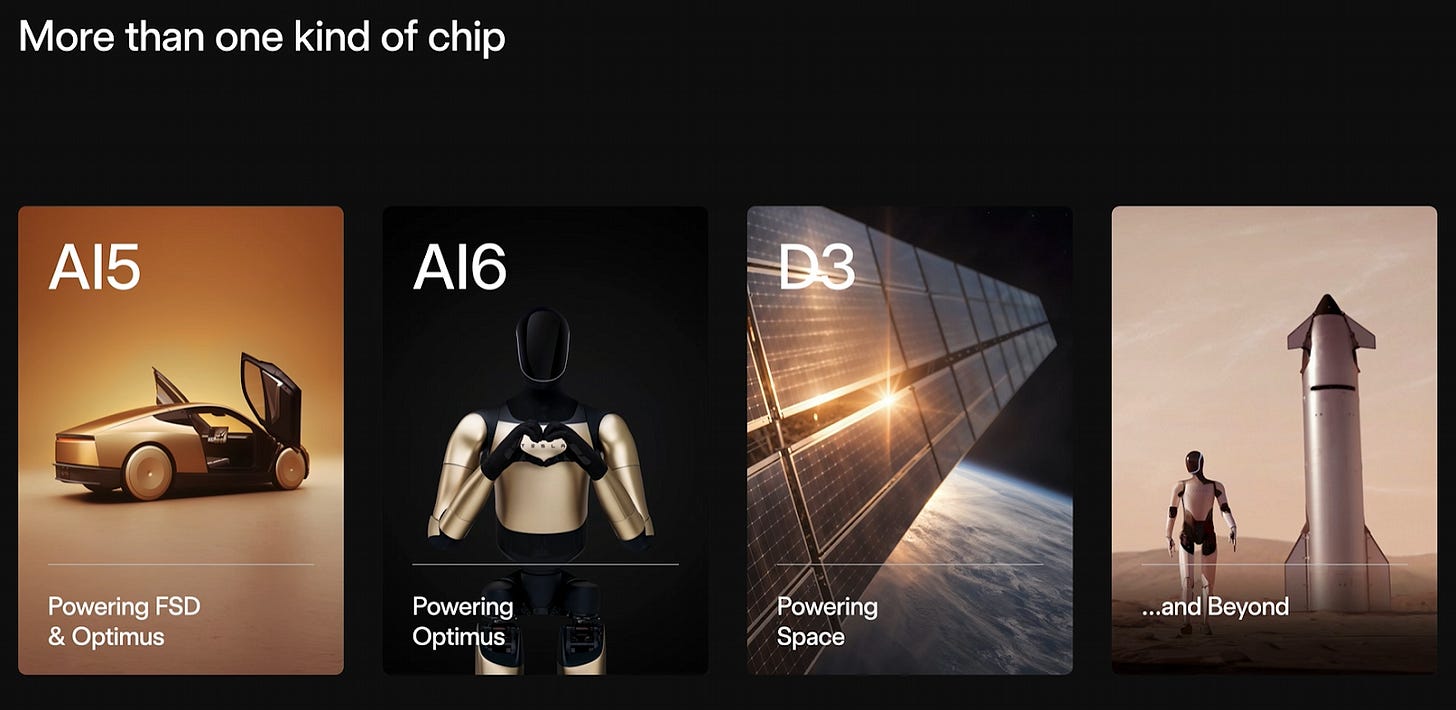

Elon Musk announced Terafab on March 21, a $25 billion chip fabrication joint venture between Tesla, SpaceX, and xAI in Austin. On April 7, Intel joined as a partner, contributing its 18A process node, a 1.8-nanometer-class technology that is the most advanced semiconductor manufacturing process in the United States.

80% of Terafab’s projected compute output is earmarked for a single chip called D3, a radiation-hardened processor built for orbital AI data centers. SpaceX has already filed an FCC application to launch one million data center satellites into low Earth orbit.

Musk reasons that AI workloads will be cheaper to run in orbit than on the ground within three years. Terafab’s two-facility structure reflects that bet. One facility is dedicated to edge AI chips for Tesla Robotaxis and Optimus robots. The other focuses entirely on the D3 for orbital deployment.

2) Muse Spark: Meta Closes the Gap and Goes Proprietary

On April 8, Meta debuted Muse Spark, the first model from its Superintelligence Labs. It was built over nine months by a team led by Alexandr Wang, Meta’s Chief AI Officer. The new model is closed-source, a notable break from the Llama playbook that helped make Meta the standard-bearer for open-weight AI and a signal that Meta’s frontier AI strategy is shifting.

Muse Spark performs competitively in multimodal perception, reasoning, health, and agentic tasks. For healthcare, Meta collaborated with 1,000 physicians to build Muse Spark’s clinical capabilities.

Meta says the model reaches Llama 4 Maverick-equivalent capability at 10x lower compute cost. The model now powers the Meta AI app and website, with rollouts to WhatsApp, Instagram, Facebook, Messenger, and Meta’s AI glasses planned in the coming weeks. Meta claims its models are scaling predictably and that Muse Spark is an early data point on that trajectory, with larger models in development.

3) OpenAI At $852B: Testing a New IPO Playbook

On March 31, OpenAI closed a $122 billion funding round at a post-money valuation of $852 billion, the largest private fundraising event in history. OpenAI was the fastest technology platform to reach 100 million users, and it is on track to be the fastest to reach 1 billion weekly active users (currently around 900 million).

CFO Sarah Friar confirmed on April 8 that OpenAI will reserve a portion of IPO shares for retail investors.

“It has to be that everyone partakes, that it isn’t just that a very small group, and everyone else gets left behind.” - Sarah Friar, CFO at OpenAI

In its pre-IPO private placement via JPMorgan, Morgan Stanley, and Goldman Sachs, OpenAI targeted $1 billion from individual investors and received $3 billion, described by those banks as the largest private retail placement they have ever executed.

OpenAI is heading toward a possible public filing in the second half of 2026 at a valuation nearing $1 trillion. The company projects $280 billion in revenue by 2030, against $20+ billion annualized today.
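
For a sense of scale, here is a quick back-of-the-envelope sketch of the annual growth rate that projection implies. The inputs are my assumptions, not OpenAI’s: I take the “$20+ billion” at its $20 billion floor and treat 2026 to 2030 as a four-year horizon. The function name `implied_cagr` is just an illustrative helper.

```python
# Back-of-the-envelope: implied compound annual growth rate (CAGR).
# Assumptions (mine, not OpenAI's): $20B baseline, 4-year horizon (2026 to 2030).


def implied_cagr(start: float, end: float, years: float) -> float:
    """Return the constant annual growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1.0 / years) - 1.0


current_revenue_b = 20.0   # "$20+ billion annualized today", taken at the floor
target_revenue_b = 280.0   # projected 2030 revenue, in billions
years = 4                  # assumed horizon

cagr = implied_cagr(current_revenue_b, target_revenue_b, years)
print(f"Implied CAGR: {cagr:.1%}")
```

Under those assumptions, revenue would need to grow roughly 93% a year, close to doubling annually for four straight years. A higher starting point or a longer horizon would pull that figure down.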

Learn With My Friends and Me…

Other Reading…

Project Glasswing (Anthropic)

Half of Planned US Data Center Builds Have Been Delayed or Canceled… (Tom’s Hardware)

The detail that stops me is the FCC filing for one million satellites. Once the compute lives in orbit and the latency problem gets solved, every ground-based data center becomes a legacy asset overnight. Intel bringing 18A into this isn't a partnership announcement, it's Intel deciding which side of that transition they want to be on. RKLB and ASTS have been pricing this infrastructure buildout for months. The market is just now starting to read the same memo.

They should just do the power in space and beam it back down to the datacenter. You get the benefit of both worlds then. Cooling of earth and excess solar in space.